The Part Most Developers Underestimate

A developer who passes the technical screen still loses the offer more often than people think.

Drawing on roughly 1,000 interviews conducted over 10 years at Amazon, principal engineer Steve Huynh documented a consistent pattern: many candidates cleared the technical bar, but the behavioral interview became the real filter. Interviewers weren't looking for polish or perfect vocabulary. They were looking for something harder to fake: evidence that a candidate thinks clearly under pressure, takes ownership of outcomes, and can work with people they've never met.

For LATAM developers interviewing with US or Canadian companies, behavioral interviews add a layer that purely technical preparation doesn't cover. Language, professional communication norms, and the way people are expected to talk about themselves in North American work culture are all different from what many developers in Colombia, Brazil, Mexico, Argentina, or Peru have encountered in local hiring processes.

That gap is real, and it's worth understanding before you start practicing answers.

What a Behavioral Interview Actually Tests

A behavioral interview uses structured questions to surface past behavior as a proxy for future performance. The underlying logic, developed through decades of industrial-organizational psychology research, is that how someone acted in a previous situation predicts how they'll act in a similar one. It's not a hypothetical assessment — it's a retrospective one.

This is why behavioral interviews are evaluated so differently from technical interviews: the technical screen tests what you know; the behavioral screen tests how you work.

Research consistently supports this approach. Schmidt and Hunter's 1998 meta-analysis, published in Psychological Bulletin and still one of the most cited studies in personnel selection, found that structured interviews — including behavioral ones — were among the most valid predictors of job performance available to hiring teams. Unstructured "conversational" interviews, by contrast, showed significantly lower validity. US tech companies adopted structured behavioral interviewing at scale partly because of this evidence base.

What interviewers are measuring when they ask behavioral questions typically falls into a few categories:

Ownership and accountability. Did you identify a problem and take steps to solve it, or did you wait for someone else to fix it? US employers, especially in distributed teams, place high value on people who move without being pushed.

Communication under ambiguity. Can you articulate a complex situation clearly? Can you explain your reasoning to someone who doesn't share your technical context?

Collaboration and conflict. How do you work with people who disagree with you? How have you navigated a difficult relationship with a stakeholder or teammate?

Judgment and decision-making. When you had incomplete information, how did you decide what to do? What happened, and what did you learn?

These aren't soft skills that can be demonstrated with generalities. Interviewers are trained to probe for specifics: What did you specifically do? What was the actual outcome? What would you do differently?

Why LATAM Developers Sometimes Struggle — And Why It's Not About Skill

There are structural reasons why behavioral interviews feel more disorienting for many developers in Latin America than the technical screen does.

Professional storytelling norms are different. In many LATAM work cultures, speaking at length about your individual contribution can feel uncomfortable — close to bragging, or disrespectful to the team. The instinct is to say "we did X" or "the team solved it." North American behavioral interviews expect the opposite: the first-person narrative of what you specifically did, decided, and contributed. This isn't a cultural defect — it's a difference. But developers who aren't aware of it often undersell themselves badly.

English proficiency at the professional level varies significantly by country. According to the EF English Proficiency Index 2025, which ranks 123 countries based on test data from 2.2 million adults, LATAM countries range widely across the spectrum. Argentina ranks 26th globally with "high" proficiency. Uruguay (34th), Paraguay (43rd), and Peru (52nd) fall in "moderate" territory. Brazil (75th) and Colombia (76th) are rated "low proficiency." Mexico ranks 103rd globally, in the "very low" band.

This variation shapes the hiring environment in each market. Hiring developers in Colombia looks different from hiring developers in Brazil, Mexico, Peru, or Argentina, and those differences show up in how candidates perform under the specific pressure of an English-language behavioral interview.

These are national averages, and the distribution within any country matters a lot: developers at tech companies in Bogotá or São Paulo typically have significantly stronger English than the population average. But the rankings do mean that, for a meaningful share of candidates from lower-ranked markets, the behavioral interview demands more English fluency than the technical screen did. Code compiles regardless of accent. Behavioral responses need to land in a second language under pressure.

The broader market for software developers in Latin America is technically strong and growing rapidly, but the cross-border communication gap remains one of the most consistent friction points in the hiring process.

The STAR structure is not intuitive. Most developers in LATAM have never been trained in it. The Situation–Task–Action–Result framework is a widely used response structure that keeps behavioral answers focused, specific, and time-bounded. Without it, answers often run long, lose the thread, or land on the outcome before explaining the context. Interviewers lose confidence not because the story was bad, but because they couldn't follow it.

The STAR Method: A Brief Practical Guide

STAR stands for:

Situation — Set the context. What was the project, team, or challenge? Keep this short — one to two sentences.

Task — What was your specific responsibility or goal in that situation?

Action — What did you do? This is the longest part. Be specific. Use "I" not "we." Explain your reasoning, not just your actions.

Result — What happened? Quantify where you can. What did you learn?

A common mistake is spending too much time on Situation and not enough on Action. Interviewers want to understand how you think and behave, not just what environment you were in. If your answer is 60% context-setting, you've spent the interview on the least important part.

Another mistake is giving a result that's vague or non-committal: "things improved" or "the team was happy." Interviewers note specificity as a signal of actual ownership. "We shipped two weeks early" or "the bug rate dropped by 40% in the next sprint" is more credible than a general positive statement.

One more: avoid answers where everything went perfectly. Interviewers are trained to notice when someone only presents successes. Understanding candidate vetting and screening from the employer side makes this clearer — experienced interviewers are specifically looking for how you handle failure, not just success.
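For developers who prefer to check a draft mechanically, the proportion advice above (short Situation, longest Action) can be sketched in a few lines of Python. This is only an illustrative helper, not a standard tool: the `StarAnswer` class, its field names, and the use of word count as a stand-in for speaking time are all assumptions made for this example. The sample text is loosely adapted from the API example in this article, and the task line is invented.

```python
from dataclasses import dataclass


@dataclass
class StarAnswer:
    """One drafted answer, split into the four STAR sections (hypothetical type)."""
    situation: str
    task: str
    action: str
    result: str

    def word_shares(self) -> dict[str, float]:
        # Word count is a rough proxy for how long each section takes to say.
        counts = {name: len(text.split()) for name, text in vars(self).items()}
        total = sum(counts.values()) or 1  # avoid dividing by zero on an empty draft
        return {name: count / total for name, count in counts.items()}


def longest_section(answer: StarAnswer) -> str:
    """Return the name of the section that takes the largest share of the answer."""
    shares = answer.word_shares()
    return max(shares, key=shares.get)


# Sample draft; the wording is illustrative only.
draft = StarAnswer(
    situation="Mid-sprint, a third-party API changed its authentication spec.",
    task="I owned the integration and its delivery date.",
    action=(
        "I flagged the risk in standup, proposed a clean rebuild or a "
        "temporary workaround, implemented the workaround my lead chose, "
        "and documented the debt in Jira."
    ),
    result="The client shipped on time and I led the cleanup two sprints later.",
)

for name, share in draft.word_shares().items():
    print(f"{name:<10} {share:.0%}")
print("longest:", longest_section(draft))
```

If `longest_section` returns anything other than `"action"`, the draft is probably spending too long on context or outcome. Treat the numbers as a prompt to re-read the answer, not as a score.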

Common Behavioral Questions and What They're Actually Testing

These questions appear frequently in US tech company interviews. The phrasing changes, but the intent is consistent.

"Tell me about a time you disagreed with a decision your team or manager made." This tests whether you can hold a position, express it constructively, and also commit to a team outcome even when you didn't get your way. The worst answers either show no real disagreement ("I've always agreed with my managers") or show unresolved conflict. The strong answers show that you raised your concern clearly, explained your reasoning, listened to the counterargument, and then either changed your mind because the counterargument was better, or committed to the direction anyway.

"Describe a situation where you had to work with incomplete requirements or information." This tests comfort with ambiguity — highly valued in distributed teams where you can't always tap someone on the shoulder. The answer should show that you identified what you knew and didn't know, made reasonable assumptions explicitly, communicated them, and moved forward rather than stalling.

"Tell me about a time a project didn't go as planned." This tests accountability and resilience. It's a mistake to assign blame externally — to a late vendor, a bad requirement, or a difficult teammate. The answer should show what you did when things started going wrong, what you communicated, and what you took away from the experience.

"Give an example of when you had to learn something quickly to complete a task." Particularly relevant for candidates from smaller tech ecosystems, this question is about learning velocity — not knowledge coverage. Interviewers want to see a genuine example of learning under time pressure, not just a statement that you're a fast learner.

"How do you assess a candidate's competencies beyond technical skills?" If you're a hiring manager rather than a candidate reading this, understanding the best tools for technical assessment of software developers gives you the full picture of how structured evaluation works on both sides of the interview.

The English and Cultural Fit Dimension

For developers interviewing with US and Canadian companies, the interview is also an assessment of how you'll function on a distributed English-speaking team — even if the job description doesn't say so explicitly.

This doesn't mean you need to sound American. It means a few specific things:

Can you follow and contribute to async written communication in English? Most distributed US teams rely heavily on Slack, pull request comments, Notion docs, and email. The ability to write clearly in English matters at least as much as speaking it. Understanding how to manage distributed engineering teams helps explain why written async communication is treated as a first-class skill.

Can you ask clarifying questions without losing your listener? "Can you give me a moment to think?" or "Could you clarify what you mean by X?" are signals of thoughtful communication. Freezing silently, or pretending you understood when you didn't, creates problems in the actual job.

Do you demonstrate familiarity with how remote teams operate? Knowing the cadences, norms, and communication patterns of US-based engineering teams — sprint reviews, async standups, documentation culture — signals that you won't need a long ramp. Reading about best practices for effective meetings with distributed teams is practical preparation, not just background reading.

Do you demonstrate curiosity about the company and role? North American interview culture generally expects candidates to ask thoughtful questions at the end. Saying "no, I think you covered everything" is read as a lack of interest or preparation. Three or four genuine questions about the team, technical challenges, or the role's growth path signal engagement.

On cultural fit specifically: The concept of "culture fit" in US tech hiring is often overused — and occasionally a cover for bias. But what it legitimately points to is whether you can communicate directly, give feedback to peers, and raise issues without waiting for permission. These are real operational expectations in flat, remote-first teams. Understanding this can help you frame your behavioral answers more effectively.

What Strong Behavioral Answers Look Like: Examples

Weak answer: "I always try to communicate well with my team and keep everyone updated. I think that's one of my strengths."

This says nothing. It's a self-assessment, not a story.

Strong answer: "In my last role, we were midway through a sprint when I realized the third-party API we were integrating had changed its authentication spec. I flagged it in our standup, explained the risk to our delivery timeline, and proposed two options: we could push back the feature two days to rebuild cleanly, or we could implement a temporary workaround and pay technical debt later. My lead preferred the workaround due to the client deadline. I implemented it, documented it in Jira, and two sprints later I led the cleanup. The client shipped on time and we resolved the debt before it caused issues."

This is a real situation, a real decision, a real outcome. The interviewer can evaluate how this person thinks.

How BetterWay Devs Sees This From the Recruiting Side

Across 16 years of nearshore developer hiring in Latin America, one pattern has been consistent.

The developers who move through full-cycle recruiting processes most successfully are rarely the ones with the best technical CVs. They're the ones who can translate their experience into a story that makes sense to a hiring manager in another country, in another language, under the specific conditions of a structured behavioral interview.

That's a learnable skill. The gap isn't talent — it's preparation and awareness of what the format actually requires.

The LATAM developer market is large and technically strong. According to GitHub's Octoverse data, the region's developer community spans an estimated 7–8 million active engineers across Brazil, Argentina, Colombia, Mexico, and the rest of the region. Most of those developers have never received structured preparation for behavioral interviews with North American companies. Talent pipeline development, from sourcing to successful offer, is where preparation gaps have the most measurable impact.

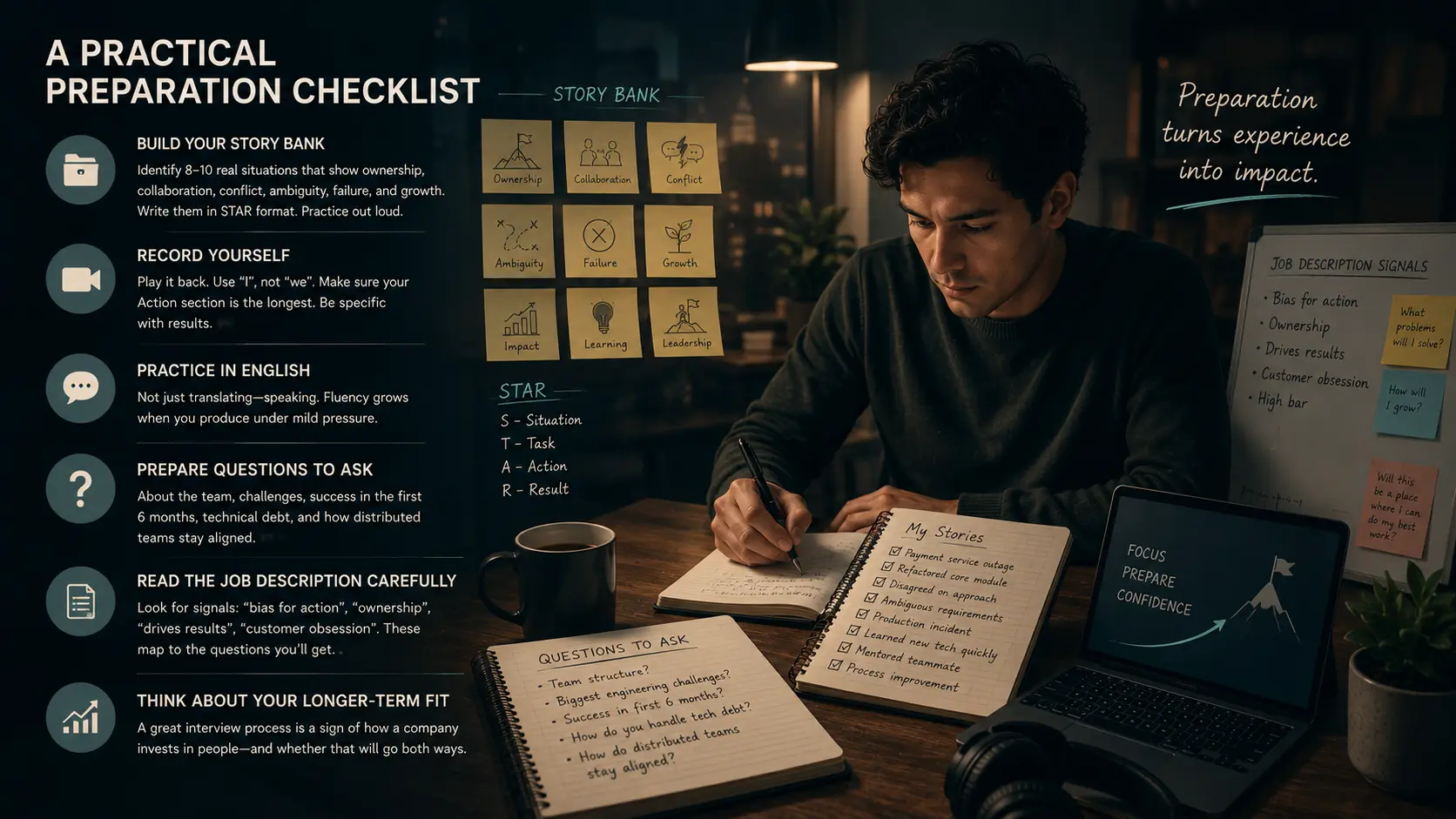

A Practical Preparation Checklist

Before your next behavioral interview with a US or Canadian company:

Build your story bank. Identify eight to ten real situations from your work history that illustrate ownership, collaboration, conflict, ambiguity, failure, and growth. Write them in STAR format. Practice them out loud — not just in your head.

Record yourself. Play it back. Check whether you say "we" where "I" belongs (most developers overuse "we"). Check whether the Action section is the longest section. Notice whether your result is specific.
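If you transcribe the recording, the "I" versus "we" check can be roughly automated. The sketch below is a minimal example under stated assumptions: the function name `pronoun_counts` and the two word lists are made up for illustration, and a simple word match will miss context (for instance, a "we" that correctly describes a team decision).

```python
import re
from collections import Counter

# Hypothetical word lists for this example. "us" is left out on purpose:
# after lowercasing it cannot be told apart from "US" the country.
SINGULAR = {"i", "me", "my", "mine"}
PLURAL = {"we", "our", "ours"}


def pronoun_counts(transcript: str) -> Counter:
    """Count first-person singular and plural pronouns in a transcript."""
    counts = Counter()
    for word in re.findall(r"[a-z']+", transcript.lower()):
        base = word.split("'")[0]  # "i'm" -> "i", "we've" -> "we"
        if base in SINGULAR:
            counts["singular"] += 1
        elif base in PLURAL:
            counts["plural"] += 1
    return counts


sample = "I flagged it. We shipped. I'm sure our team agreed."
counts = pronoun_counts(sample)
print(counts["singular"], counts["plural"])  # prints "2 2"
```

A transcript where the plural count dominates the Action section is worth a second look. The goal is not to remove every "we", only to make sure your own decisions are stated in the first person.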

Practice in English. Not just translating — speaking. The fluency gap doesn't close by reading in English; it closes by producing in English under mild pressure. Understanding how to hire remote developers from the company's side helps you understand what English fluency actually looks like at the team level — and what threshold hiring managers are evaluating against.

Prepare five questions to ask: about the team structure, the engineering challenges they're facing, what success looks like in the first six months, how they handle technical debt, and how distributed teams stay coordinated. Ask questions that show you've thought about the role.

Read the job description carefully. US job descriptions often signal which behavioral competencies will be tested. Phrases like "bias for action," "ownership," "drives results," "customer obsession" map directly to the types of behavioral questions you'll face.

Think about your longer-term fit. Companies that invest in structured interview processes also tend to invest more in retaining top talent and in career development. The interview is the first signal of whether that investment will go both ways.

Frequently Asked Questions

What is a behavioral interview in software engineering?

A behavioral interview is a structured conversation in which interviewers ask candidates to describe specific past situations to assess how they've handled challenges, decisions, and interpersonal dynamics. Unlike technical interviews, behavioral interviews focus on judgment, communication, collaboration, and ownership rather than code or algorithms.

Why do US tech companies use behavioral interviews?

Research in personnel selection, including the widely cited 1998 meta-analysis by Schmidt and Hunter in Psychological Bulletin, found that structured behavioral interviews are among the most valid predictors of job performance available to employers. US tech companies — particularly those with distributed teams — use them to assess whether a candidate will be effective in a remote, cross-functional environment.

What is the STAR method?

STAR stands for Situation, Task, Action, Result. It's a response structure for behavioral interview questions that keeps answers focused, specific, and time-bounded. The Action section — what you specifically did — should be the longest and most detailed part of the answer.

Why is behavioral interviewing harder for LATAM developers than the technical screen?

Several factors compound: professional storytelling norms differ (team-first framing is common in LATAM cultures, whereas US behavioral interviews expect first-person narratives); English proficiency varies significantly across the region (according to the EF EPI 2025, countries range from "high" to "very low" on the index); and the STAR structure is rarely taught explicitly in LATAM hiring contexts.

What English level is needed to pass a behavioral interview with a US tech company?

There is no fixed benchmark, but candidates generally need enough fluency to explain a technical situation clearly, respond to follow-up questions, and communicate nuance in real time. That corresponds roughly to the B2–C1 range on the CEFR scale. More important than accent or vocabulary breadth is the ability to be precise and direct, qualities that also matter in the job itself.

What questions should LATAM developers ask at the end of a behavioral interview?

Questions that demonstrate preparation and genuine interest in the role: how the team operates across time zones, what the biggest technical challenges are, how the company handles engineering disagreements, what growth looks like for someone in this role, or what a successful first 90 days looks like. Avoid questions whose answers are obvious from the job posting.

Are behavioral interviews the same at all US companies?

The format is broadly similar, but the specific competencies emphasized vary. Companies with explicit leadership principles (like Amazon) use behavioral interviews tightly aligned to those principles. Startups may be more conversational. The STAR method and first-person storytelling are useful preparation for both.

If you're a software developer in Latin America preparing to interview for a role with a US or Canadian company — or if you're a hiring manager trying to understand what the process looks like from the candidate's side — BetterWay Devs has been navigating this space for over 16 years.

We work with developers across 10+ countries in the region and help both candidates and clients understand what the cross-border hiring process actually involves. You can browse active developer profiles at candidates.betterway.dev or reach out directly if you're evaluating LATAM talent for a specific role.

Sources

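- Huynh, Steve. Account of conducting roughly 1,000 interviews at Amazon, published in The Pragmatic Engineer newsletter. https://newsletter.pragmaticengineer.com/p/learnings-from-conducting-1000-interviews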

- Schmidt, Frank L. and Hunter, John E. "The Validity and Utility of Selection Methods in Personnel Psychology: Practical and Theoretical Implications of 85 Years of Research Findings." Psychological Bulletin, Vol. 124, No. 2, 1998. — One of the most-cited meta-analyses in personnel selection research.

- EF Education First. EF English Proficiency Index 2025 (14th edition). Rankings of 123 countries by English proficiency based on 2.2 million adult test-takers. — ef.com/wwen/epi/

- GitHub. Octoverse 2022: The State of Open Source. Data on developer community size by region and country. — octoverse.github.com

- McDaniel, M.A., Whetzel, D.L., Schmidt, F.L., & Maurer, S.D. "The Validity of Employment Interviews: A Comprehensive Review and Meta-Analysis." Journal of Applied Psychology, 79(4), 1994. — Supporting research on structured vs. unstructured interview validity.
